18.1 – Multiple Linear Regression

Introduction

Last time we introduced simple linear regression:

- one independent X variable

- one dependent Y variable.

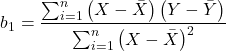

The linear relationship between Y and X was estimated by the method of Ordinary Least Squares (OLS). OLS minimizes the sum of squared distances between the observed responses, ![]() and responses predicted

and responses predicted ![]() by the line. Simple linear regression is analogous to our one-way ANOVA — one outcome or response variable and one factor or predictor variable (Chapter 12.2).

by the line. Simple linear regression is analogous to our one-way ANOVA — one outcome or response variable and one factor or predictor variable (Chapter 12.2).

But the world is complicated and so, our one-way ANOVA was extended to the more general case of two or more predictor (factor) variables (Chapter 14). As you might have guessed by now, we can extend simple regression to include more than one predictor variable. In fact, combining ANOVA and regression gives you the general linear model! And, you should not be surprised that statistics has extended this logic to include not only multiple predictor variables, but also multiple response variables. Multiple response variables falls into a category of statistics called multivariate statistics.

Like multi-way ANOVA, multiple regression is the extension of simple linear regression from one independent predictor variable to include two or more predictors. The benefit of this extension is obvious — our models gain realism. All else being equal, the more predictors, the better the model will be at describing and/or predicting the response. Things are not all equal, of course, and we’ll consider two complications of this basic premise, that more predictors are best; in some cases they are not.

However, before discussing the exceptions or even the complications of a multiple linear regression model, we begin by obtaining estimates of the full model, then introduce aspects of how to evaluate the model. We also introduce and whether a reduced model may be the preferred model.

R code

Multiple regression is easy to do in Rcmdr — recall that we used the general linear model function, lm(), to analyze one-way ANOVA and simple linear regression. In R Commander, we access lm() by

Rcmdr: Statistics → Fit model → Linear model

You may, however, access linear regression through R Commander

We use the same general linear model function for cases of multi-way ANOVA and for multiple regression problems. Simply enter more than one ratio-scale predictor variable and boom!

You now have yourself a multiple regression. You would then proceed to generate the ANOVA table for hypothesis testing

Rcmdr: Models → Hypothesis testing → ANOVA tables

From the output of the regression command, estimates of the coefficients along with standard errors for the estimate and results of t-tests for each coefficient against the respective null hypotheses for each coefficient are also provided. In our discussion of simple linear regression we introduced the components: the intercept, the slope, as well as the concept of model fit, as evidenced by R2, the coefficient of determination. These components exist for the multiple regression problem, too, but now we call the slopes partial regression slopes because there are more than one.

Our full multiple regression model becomes

![]()

where the coefficients β1, β2, … βn are the partial regression slopes and β0 is the Y-intercept for a model with 1 – n predictor variables. Each coefficient has a null hypothesis, each has a standard error, and therefore, each coefficient can be tested by t-test.

Now, regression, like ANOVA, is an enormous subject and we cannot do it justice in the few days we will devote to it. We can, however, walk you through a fairly typical example. I’ve posted a small data set diabetesCholStatin at the end of this page. Scroll down or click here. View the data set and complete your basic data exploration routine: make scatterplots and box plots. We think (predict) that body size and drug dose cause variation in serum cholesterol levels in adult men. But do both predict cholesterol levels?

Selecting the best model

We have two predictor variables, and we can start to see whether none, one, or both of the predictors contribute to differences in cholesterol levels. In this case, both contribute significantly. The power of multiple regression approaches is that it provides a simultaneous test of a model which may have many explanatory variables deemed appropriate to describe a particular response. More generally, it is sometimes advisable to think more philosophically about how to select a best model.

In model selection, some would invoke Occam’s razor — given a set of explanations, the simplest should be selected — to justify seeking simpler models. There are a number of approaches (forward selection, backward selection, or stepwise selection), and the whole effort of deciding among competing models is complicated with a number of different assumptions, strengths and weaknesses. I refer you to the discussion below, which of course is just a very brief introduction to a very large subject in (bio)statistics!

Let’s get the full regression model

The statistical model is

![]()

As written in R format, our model is ChLDL ~ BMI + Dose + Statin.

Note 1. BMI is ratio scale and Statin is categorical (two levels: Statin1, Statin2). Dose can be viewed as categorical, with five levels (5, 10, 20, 40, 80 mg), interval scale, or ratio scale. If we are make the assumption that the difference between 5, 10, up to 80 is meaningful, and that the effect of dose is at least proportional if not linear with respect to ChLDL, then we would treat Dose as ratio scale, not interval scale. That’s what we did here.

We can now proceed in R Commander to fit the model.

Rmdr: Statistics → Fit models → Linear model

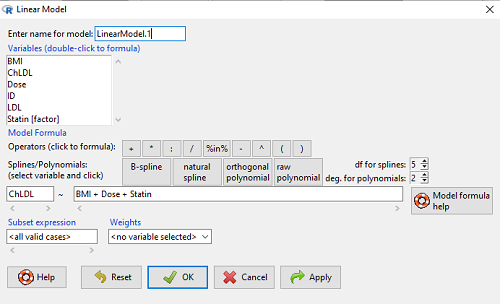

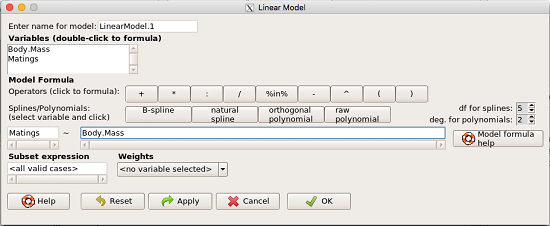

How the model is inputted into linear model menu is shown in Figure 1.

Figure 1. Screenshot of Rcmdr linear model menu with our model elements in place.

The output

summary(LinearModel.1)

Call:

lm(formula = ChLDL ~ BMI + Dose + Statin, data = cholStatins)

Residuals:

Min 1Q Median 3Q Max

-3.7756 -0.5147 -0.0449 0.5038 4.3821

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 1.016715 1.178430 0.863 0.39041

BMI 0.058078 0.047012 1.235 0.21970

Dose -0.014197 0.004829 -2.940 0.00411 **

Statin[Statin2] 0.514526 0.262127 1.963 0.05255 .

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 1.31 on 96 degrees of freedom

Multiple R-squared: 0.1231, Adjusted R-squared: 0.09565

F-statistic: 4.49 on 3 and 96 DF, p-value: 0.005407

Question. What are the estimates of the model coefficients (rounded)?

= intercept = 1.017

= intercept = 1.017 = slope for variable BMI = 0.058

= slope for variable BMI = 0.058 = slope for variable Dose = -0.014

= slope for variable Dose = -0.014 = slope for variable Statin = -0.515

= slope for variable Statin = -0.515

Question. Which of the three coefficients were statistically different from their null hypothesis (p-value < 5%)?

Answer: Only ![]() coefficient was judged statistically significant at the Type I error level of 5% (p = 0.0041). Of the four null hypotheses we have for the coefficients:

coefficient was judged statistically significant at the Type I error level of 5% (p = 0.0041). Of the four null hypotheses we have for the coefficients:

= 0

= 0 = 0

= 0 = 0; we only reject the null hypothesis for

= 0; we only reject the null hypothesis for Dosecoefficient. = 0)

= 0)

The important take-home concept is about the lack of a direct relationship between the magnitude of the estimate of the coefficient and the likelihood that it will be statistically significant! In absolute value terms ![]() , but

, but ![]() was not close to statistical significance (p = 0.220).

was not close to statistical significance (p = 0.220).

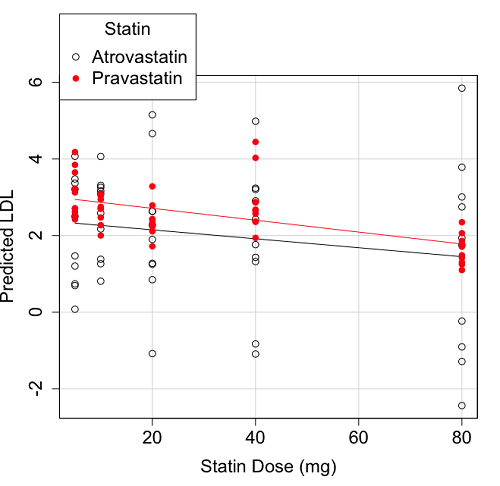

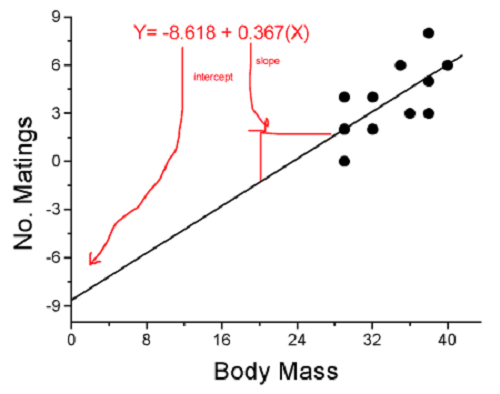

We generate a 2D scatterplot and include the regression lines (by group=Statin) to convey the relationship between at least one of the predictors (Fig 2).

Figure 2. Scatter plot of predicted LDL against dose of a statin drug. Regression lines represent the different statin drugs (Statin1, Statin2).

Question. Based on the graph, can you explain why there will be no statistical differences between levels of the statin drug type, Statin1 (shown open circles) vs. Statin2 (shown closed red circles).

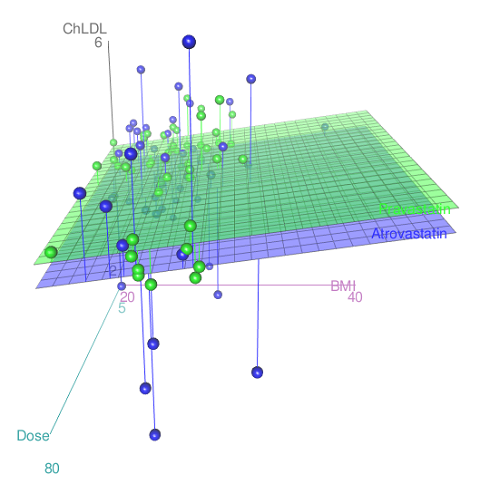

Because we have two predictors (BMI and Statin Dose), you may also elect to use a 3D-scatterplot. Here’s one possible result (Fig 3).

Figure 3. 3D plot of BMI and dose of Statin drugs on change in LDL levels (green Statin2, blue Statin1).

R code for Figure 3.

Graph made in Rcmdr: Graphs → 3D Graph → 3D scatterplot …

scatter3d(ChLDL~BMI+Dose|Statin, data=diabetesCholStatin, fit="linear", residuals=TRUE, parallel=FALSE, bg="white", axis.scales=TRUE, grid=TRUE, ellipsoid=FALSE)

Note 2: Figure 3 is a challenging graphic to interpret. I wouldn’t use it because it doesn’t convey a strong message. With some effort we can see the two planes representing mean differences between the two statin drugs across all predictors, but it’s a stretch. No doubt the graph can be improved by changing colors, for example, but I think the 2d plot Figure 2 works better). Alternatively, if the platform allows, you can use animation options to help your reader see the graph elements. Interactive graphics are very promising and, again, unsurprisingly, there are several R packages available. For this example, plot3d() of the package rgl can be used. Figure 4 is one possible version; I saved images and made animated gif.

Figure 4. An example of a possible interactive 3D plot; the file embedded in this page is not interactive, just an animation.

Diagnostic plots

While visualization concerns are important, let’s return to the statistics. All evaluations of regression equations should involve an inspection of the residuals. Inspection of the residuals allows you to decide if the regression fits the data; if the fit is adequate, you then proceed to evaluate the statistical significance of the coefficients.

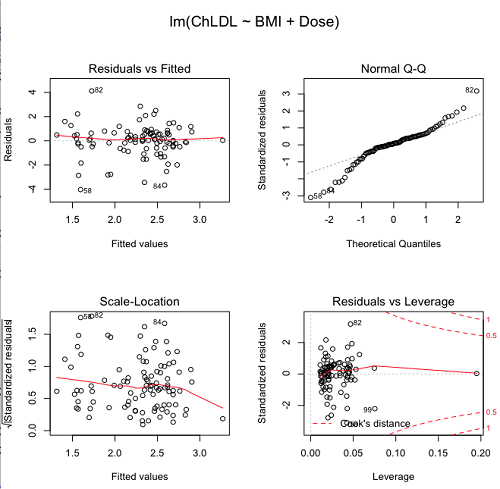

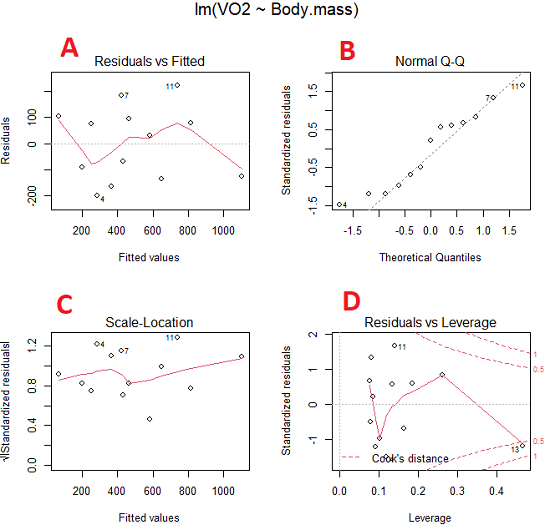

The default diagnostic plots (Fig 5) R provides are available from

Rcmdr: Models → Graphs → Basic diagnostics plots

Four plots are returned

Figure 5. R’s default regression diagnostic plots.

Each of these diagnostic plots in Figure 4 gives you clues about the model fit.

- Plot of residuals vs. fitted helps you identify patterns in the residuals

- Normal Q-Q plot helps you to see if the residuals are approximately normally distributed

- Scale-location plot provides a view of the spread of the residuals

- The residuals vs. leverage plot allows you to identify influential data points.

We introduced these plots in Chapter 17.8 when we discussed fit of simple linear model to data. My conclusion? No obvious trend in residuals, so linear regression is a fit to the data; data not normally distributed Q-Q plot shows S-shape.

Interpreting the diagnostic plots for this problem

The “Normal Q-Q” plot allows us to view our residuals against a normal distribution (the dotted line). Our residuals do no show an ideal distribution: low for the first quartile, about on the line for intermediate values, then high for the 3rd and 4th quartile residuals. If the data were bivariate normal we would see the data fall along a straight line. The “S-shape” suggests log-transformation of the response and or one or more of the predictor variables.

There also seems to be a pattern in residuals vs the predicted (fitted) values. There is a trend of increasing residuals as cholesterol levels increase, which is particularly evident in the “scale-location” plot. Residuals tended to be positive at low and high doses, but negative at intermediate doses. This suggests that the relationship between predictors and cholesterol levels may not be linear, and it demonstrates what statisticians refer to as a monotonic spread of residuals.

The last diagnostic plot looks for individual points that influence, change, or “leverage” the regression — in other words, if a point is removed, does the general pattern change? If so, then the point had “leverage” and thus we need to decide whether or not to include the datum. diagnostic plots Cook’s distance is a measure of the influence of a point in regression. Points with large Cook’s distance values warrant additional checking.

The multicollinearity problem

Statistical model building is a balancing act by the statistician. While simpler models may be easier to interpret and, perhaps, to use, it is a basic truism that the more predictor variables the model includes, the more realistic the statistical model. However, each additional parameter that is added to the statistical model must be independent of all other parameters already in the model. To the extent that this assumption is violated, the problem is termed multicollinearity. If predictor variables are highly correlated, then they are essentially just linear combinations and do not provide independent evidence. For example, one would naturally not include two core body temperature variables in a statistical model on basal metabolic rate, one in degrees Fahrenheit and the other in degrees Celsius, because it is a simple linear conversion between the two units. This would be an example of structural collinearity: the collinearity is because of misspecification of the model variables. In contrast, collinearity among predictor variables may because the data are themselves correlated. For example, if multiple measures of body size are included: weight, height, length of arm, etc., then we would expect these to be correlated, ie, data multicollinearity.

Collinearity in statistical models may have a number of undesirable effects on a multiple regression model. These include

- estimates of coefficients not stable: with collinearity, values of coefficients depend on other variables in the model; if collinear predictors, then the assumption of independent predictor variables is violated.

- precision of the estimates decreases (standard error of estimates increase).

- statistical power decreases.

- p-values for individual coefficients not trust worthy.

Tolerance and Variance Inflation Factor

Absence of multicollinearity is important assumption of multiple regression. A partial test is to calculate product moment correlations among predictor variables. For example, when we calculate the correlation between BMI and Dose for our model, we get r = 0.101 (P = 0.3186), and therefore would tentatively conclude that there was little correlation between our predictor variables.

A number of diagnostic statistics have been developed to test for multicollinearity. Tolerance for a particular independent variable (Xi) is defined as 1 minus the proportion of variance it shares with the other independent variables in the regression analysis (1 − R2i) (O’Brien 2007). Tolerance reports the proportion of total variance explained by adding the Xi–th predictor variable that is unrelated to the other variables in the model. A small value for tolerance indicates multicollinearity — and that the predictor variable is nearly a perfect combination (linear) of the variables already in the model and therefore should be omitted from the model. Because tolerance is defined in relation to the coefficient of determination you can interpret a tolerance score as the unique variance accounted for by a predictor variable.

A second, related diagnostic of multicollinearity is called the Variance Inflation Factor, VIF. VIF is the inverse of tolerance.

![]()

VIF shows how much of the variance of a regression coefficient is increased because of collinearity with the other predictor variables in the model. VIF is easy to interpret: a tolerance of 0.01 has a VIF of 100; a tolerance of 0.1 has a VIF of 10; a tolerance of 0.5 has a VIF of 2, and so on. Thus, small values of tolerance and large values of VIF are taken as evidence of multicollinearity.

Rcmdr: Models → Numerical diagnostics → Variation-inflation factors

vif(RegModel.2) BMI Dose 1.010256 1.010256

A rule of thumb is that if VIF is greater than 5 then there is multicollinearity; with VIF values close to one we would conclude, like our results from the partial correlation estimate above, that there is little evidence for a problem of collinearity between the two predictor variables. They can therefore remain in the model.

Solutions for multicollinearity

If there is substantial multicollinearity then you cannot simply trust the estimates of the coefficients. Assuming that there hasn’t been some kind of coding error on your part, then you may need to find a solution. One solution is to drop one of the predictor variables and redo the regression model. Another option is to run what is called a Principal Components Regression (PCR). One takes the predictor variables and runs a Principal Component Analysis to reduce the number of variables, then the regression is run on the PCA components. By definition, the PCA components are independent of each other. Another option is to use ridge regression approach.

Note 3. Principal Component Analysis (PCA) is a dimensionality reduction technique that finds new uncorrelated variables (principal components) by maximizing variance. Principal Component Regression (PCR) uses PCA as a preprocessing step to create a regression model. The key difference is that PCA is an unsupervised method for data transformation, whereas PCR is a supervised regression technique that uses the components from PCA to predict an outcome. It’s probably not a great idea to use the acronym PCR in our biology reports; easily confused with polymerase chain reaction

Like any diagnostic rule, however, one should not blindly apply a rule of thumb. A VIF of 10 or more may indicate multicollinearity, but it does not necessarily lead to the conclusion that the linear regression model requires that the researcher reduce the number of predictor variables or analyze the problem using a different statistical method to address multicollinearity as the sole criteria of a poor statistical model. Rather, the researcher needs to address all of the other issues about model and parameter estimate stability, including sample size. Unless the collinearity is extreme (like a correlation of 1.0 between predictor variables!), larger sample sizes alone will work in favor of better model stability (by lowering the sample error) (O’Brien 2007).

Questions

- Write up three learning outcomes for this page. Hint: Point your favorite generative AI to this page and ask for help.

- Please explain why the magnitude of the slope is not the key to statistical significance of a slope? Hint: look at the equation of the t-test for statistical significance of the slope.

- Consider the following scenario. A researcher measures his subjects multiple times for blood pressure over several weeks, then plots all of the values over time. In all, the data set consists of thousands of readings. He then proceeds to develop a model to explain blood pressure changes over time. What kind of collinearity is present in his data set? Explain your choice.

- We noted that

Dosecould be viewed as categorical variable. ConvertDoseto factor variable (fDose) and redo the linear model. Compare the summary output and discuss the additional coefficients.- Use Rcmdr: Data → Manage variables in active data set → Convert numeric Variables to Factors to create a new factor variable

fDose. It’s ok to use the numbers as factor levels.

- Use Rcmdr: Data → Manage variables in active data set → Convert numeric Variables to Factors to create a new factor variable

- We flagged the change in LDL as likely to be not normally distributed. Create a log10-transformed variable for

ChLDLand perform the multiple regression again.- Write the new statistical model

- Obtain the regression coefficients — are they statistically significant?

- Run basic diagnostic plots and evaluate for

Quiz Chapter 18.1

Multiple Linear Regression

Data set

| ID | Statin | Dose | BMI | LDL | ChLDL |

|---|---|---|---|---|---|

| 1 | Statin2 | 5 | 19.5 | 3.497 | 2.714778 |

| 2 | Statin1 | 20 | 20.2 | 4.268 | 1.276483 |

| 3 | Statin2 | 40 | 20.3 | 3.989 | 2.677377 |

| 4 | Statin2 | 20 | 20.3 | 3.502 | 2.430618 |

| 5 | Statin2 | 80 | 20.4 | 3.766 | 1.79463 |

| 6 | Statin2 | 20 | 20.6 | 3.44 | 2.234295 |

| 7 | Statin1 | 20 | 20.7 | 3.414 | 2.635305 |

| 8 | Statin1 | 10 | 20.8 | 3.222 | 0.809181 |

| 9 | Statin1 | 10 | 21.1 | 4.04 | 3.259599 |

| 10 | Statin1 | 40 | 21.2 | 4.429 | 1.763997 |

| 11 | Statin1 | 5 | 21.2 | 3.528 | 3.369377 |

| 12 | Statin1 | 40 | 21.5 | 3.01 | -0.827154 |

| 13 | Statin2 | 20 | 21.6 | 3.393 | 2.11172 |

| 14 | Statin1 | 10 | 21.7 | 4.512 | 3.166238 |

| 15 | Statin1 | 80 | 22 | 5.449 | 3.00833 |

| 16 | Statin2 | 10 | 22.2 | 4.03 | 3.05013 |

| 17 | Statin2 | 40 | 22.2 | 3.911 | 2.646034 |

| 18 | Statin2 | 10 | 22.2 | 3.724 | 2.945656 |

| 19 | Statin1 | 5 | 22.2 | 3.238 | 3.209584 |

| 20 | Statin2 | 10 | 22.5 | 4.123 | 3.088763 |

| 21 | Statin1 | 20 | 22.6 | 3.859 | 5.152548 |

| 22 | Statin1 | 10 | 23 | 4.926 | 2.58483 |

| 23 | Statin2 | 20 | 23 | 3.512 | 2.291975 |

| 24 | Statin1 | 5 | 23 | 3.838 | 1.469 |

| 25 | Statin2 | 20 | 23.1 | 3.548 | 2.34079 |

| 26 | Statin1 | 5 | 23.1 | 3.424 | 1.204346 |

| 27 | Statin1 | 40 | 23.2 | 3.709 | 3.238179 |

| 28 | Statin1 | 80 | 23.2 | 4.786 | 2.748643 |

| 29 | Statin1 | 20 | 23.3 | 4.103 | 1.250082 |

| 30 | Statin1 | 40 | 23.4 | 3.341 | 1.432292 |

| 31 | Statin1 | 10 | 23.5 | 3.828 | 1.381755 |

| 32 | Statin2 | 10 | 23.8 | 4.02 | 3.039187 |

| 33 | Statin1 | 20 | 23.8 | 3.942 | 0.848328 |

| 34 | Statin2 | 20 | 23.8 | 2.89 | 1.721163 |

| 35 | Statin1 | 80 | 23.9 | 3.326 | 1.939346 |

| 36 | Statin1 | 10 | 24.1 | 4.071 | 3.090741 |

| 37 | Statin1 | 40 | 24.1 | 4.222 | 1.322305 |

| 38 | Statin2 | 10 | 24.1 | 3.44 | 2.472223 |

| 39 | Statin1 | 5 | 24.2 | 3.507 | 0.076817 |

| 40 | Statin2 | 20 | 24.2 | 3.647 | 2.425758 |

| 41 | Statin2 | 80 | 24.3 | 3.812 | 1.710575 |

| 42 | Statin2 | 40 | 24.3 | 3.305 | 1.940572 |

| 43 | Statin2 | 5 | 24.3 | 3.455 | 2.502214 |

| 44 | Statin2 | 5 | 24.4 | 4.258 | 3.228008 |

| 45 | Statin1 | 5 | 24.4 | 4.16 | 3.477747 |

| 46 | Statin2 | 80 | 24.4 | 4.128 | 2.063247 |

| 47 | Statin1 | 80 | 24.5 | 4.507 | 3.784422 |

| 48 | Statin1 | 5 | 24.5 | 3.553 | 0.695709 |

| 49 | Statin2 | 10 | 24.5 | 3.616 | 2.69987 |

| 50 | Statin2 | 80 | 24.6 | 3.372 | 1.300401 |

| 51 | Statin2 | 80 | 24.6 | 3.667 | 1.418109 |

| 52 | Statin2 | 5 | 24.7 | 3.854 | 3.126671 |

| 53 | Statin1 | 80 | 24.7 | 3.32 | -1.286439 |

| 54 | Statin2 | 5 | 24.7 | 3.756 | 2.423664 |

| 55 | Statin1 | 40 | 24.8 | 4.398 | 2.907473 |

| 56 | Statin2 | 40 | 24.9 | 3.621 | 2.362429 |

| 57 | Statin1 | 10 | 25 | 3.17 | 1.264656 |

| 58 | Statin1 | 80 | 25.1 | 3.424 | -2.436908 |

| 59 | Statin2 | 10 | 25.1 | 3.196 | 2.001465 |

| 60 | Statin2 | 80 | 25.2 | 3.367 | 1.100704 |

| 61 | Statin1 | 80 | 25.2 | 3.067 | -0.23154 |

| 62 | Statin1 | 20 | 25.3 | 3.678 | 4.662866 |

| 63 | Statin2 | 5 | 25.5 | 4.077 | 2.611705 |

| 64 | Statin1 | 20 | 25.5 | 3.678 | 2.633053 |

| 65 | Statin2 | 5 | 25.6 | 4.994 | 4.180082 |

| 66 | Statin1 | 20 | 25.8 | 3.699 | 1.899031 |

| 67 | Statin1 | 10 | 25.9 | 3.507 | 4.063757 |

| 68 | Statin2 | 20 | 25.9 | 3.445 | 2.303761 |

| 69 | Statin1 | 5 | 26 | 4.025 | 2.501427 |

| 70 | Statin1 | 5 | 26.3 | 3.616 | 0.740863 |

| 71 | Statin2 | 40 | 26.4 | 3.937 | 2.573321 |

| 72 | Statin2 | 40 | 26.4 | 3.823 | 2.363839 |

| 73 | Statin1 | 10 | 26.7 | 4.46 | 2.174198 |

| 74 | Statin2 | 5 | 26.7 | 5.03 | 3.845271 |

| 75 | Statin2 | 10 | 26.7 | 3.73 | 2.708896 |

| 76 | Statin2 | 10 | 26.7 | 3.232 | 2.272627 |

| 77 | Statin1 | 80 | 26.8 | 3.693 | 1.751169 |

| 78 | Statin2 | 80 | 27 | 4.108 | 1.86131 |

| 79 | Statin2 | 40 | 27.2 | 5.398 | 4.028977 |

| 80 | Statin2 | 80 | 27.2 | 4.517 | 2.348903 |

| 81 | Statin2 | 20 | 27.3 | 3.901 | 2.790047 |

| 82 | Statin1 | 80 | 27.3 | 5.247 | 5.848545 |

| 83 | Statin2 | 80 | 27.4 | 3.507 | 1.247863 |

| 84 | Statin1 | 20 | 27.4 | 3.807 | -1.079928 |

| 85 | Statin2 | 80 | 27.6 | 3.574 | 1.486789 |

| 86 | Statin1 | 40 | 27.8 | 4.16 | 2.427753 |

| 87 | Statin2 | 20 | 28 | 4.501 | 3.284648 |

| 88 | Statin2 | 5 | 28.1 | 3.621 | 2.699007 |

| 89 | Statin1 | 40 | 28.2 | 3.652 | -1.091256 |

| 90 | Statin2 | 40 | 28.2 | 4.191 | 2.874231 |

| 91 | Statin2 | 40 | 28.4 | 5.791 | 4.445454 |

| 92 | Statin1 | 40 | 28.6 | 4.698 | 3.202874 |

| 93 | Statin1 | 5 | 29 | 4.32 | 4.070753 |

| 94 | Statin2 | 10 | 29.1 | 3.776 | 2.751281 |

| 95 | Statin2 | 5 | 29.2 | 4.703 | 3.64949 |

| 96 | Statin2 | 40 | 29.9 | 4.128 | 2.864691 |

| 97 | Statin1 | 40 | 30.4 | 4.693 | 4.983704 |

| 98 | Statin1 | 20 | 30.4 | 4.123 | 2.273898 |

| 99 | Statin1 | 80 | 30.5 | 3.921 | -0.903438 |

| 100 | Statin1 | 10 | 36.5 | 4.175 | 3.311437 |

Chapter 18 contents

17.8 – Assumptions and model diagnostics for Simple Linear Regression

Introduction

The assumptions for all linear regression:

- Linear model is appropriate.

The data are well described (fit) by a linear model. - Independent values of Y and equal variances.

Although there can be more than one Y for any value of X, the Y‘s cannot be related to each other (that’s what we mean by independent). Since we allow for multiple Y‘s for each X, then we assume that the variances of the range of Y‘s are equal for each X value (this is similar to our ANOVA assumptions for equal variance by groups). Another term for equal variances is homoscedasticity. - Normality.

For each X value there is a normal distribution of Y‘s (think of doing the experiment over and over). - Error

The residuals (error) are normally distributed with a mean of zero.

Note the mnemonic device: Linear, Independent, Normal, Error or LINE.

Each of the four elements will be discussed below in the context of Model Diagnostics. These assumptions apply to how the model fits the data. There are other assumptions that, if violated, imply you should use a different method for estimating the parameters of the model.

Ordinary least squares makes the additional assumption about the quality of the independent variable that e that measurement of X is done without error. Measurement error is a fact of life in science, but the influence of error on regression differs if the error is associated with the dependent or independent variable. Measurement error in the dependent variable increases the dispersion of the residuals but will not affect the estimates of the coefficients; error associated with the independent variables, however, will affect estimates of the slope. In short, error in X leads to biased estimates of the slope.

The equivalent, but less restrictive practical application of this assumption is that the error in X is at least negligible compared to the measurements in the dependent variable.

Multiple regression makes one more assumption, about the relationship between the predictor variables (the X variables). The assumption is that there is no multicollinearity, a subject we will bring up next time (see Chapter 18).

Model diagnostics

We just reviewed how to evaluate the estimates of the coefficients of the model. Now we need to address a deeper meaning — how well the model explains the data. Consider a simple linear regression first. If Ho: b = 0 is not rejected — then the slope of the regression equation is taken to not differ from zero. We would conclude that if repeated samples were drawn from the population, on average, the regression equation would not fit the data well (lots of scatter) and it would not yield useful prediction.

However, recall that we assume that the fit is linear. One assumption we make in regression is that a line can, in fact, be used to describe the relationship between X and Y.

Here are two very different situations where the slope = 0.

Example 1. Linear Slope = 0, No relationship between X and Y

Example 2. Linear Slope = 0, A significant relationship between X and Y

But even if Ho: b = 0 is rejected (and we conclude that a linear relationship between X and Y is present), we still need to be concerned about the fit of the line to the data — the relationship may be more nonlinear than linear, for example. Here are two very different situations where the slope is not equal to 0.

Example 3. Linear Slope > 0, a linear relationship between X and Y

Example 4. Linear Slope > 0, curve-linear relationship between X and Y

How can you tell the difference? There are many regression diagnostic tests, many more than we can cover, but you can start with looking at the coefficient of determination (low R2 means low fit to the line), and we can look at the pattern of residuals plotted against the either the predicted values or the X variables (my favorite). The important points are:

- In linear regression, you fit a model (the slope + intercept) to the data;

- We want the usual hypothesis tests (are the coefficients different from zero?) and

- We need to check to see if the model fits the data well. Just like in our discussions of chi-square, a “perfect fit would mean that the difference between our model and the data would be zero.

Graph options

Using residual plots to diagnose regression equations

Yes, we need to test the coefficients (intercept Ho = 0; slope Ho = 0) of a regression equation, but we also must decide if a regression is an appropriate description of the data. This topic includes the use of diagnostic tests in regression. We address this question chiefly by looking at

- scatterplots of the independent (predictor) variable(s) vs. dependent (response) variable(s).

what patterns appear between X and Y? Do your eyes tell you “Line”? “Curve”? “No relation”? - coefficient of determination

closer to zero than to one? - patterns of residuals plotted against the X variables (other types of residual plots are used to, this is one of my favorites)

Our approach is to utilize graphics along with statistical tests designed to address the assumptions.

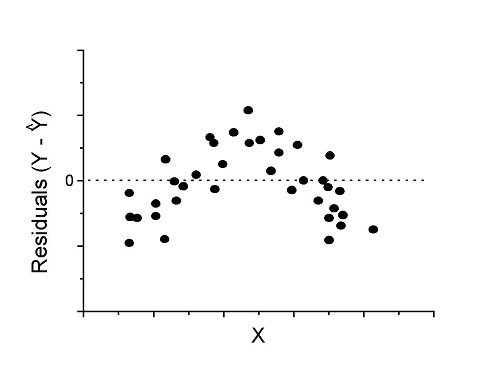

One typical choice is to see if there are patterns in the residual values plotted against the predictor variable. If the LINE assumptions hold for your data set, then the residuals should have a mean of zero with scatter about the mean. Deviations from LINE assumptions will show up in residual plots.

Here are examples of POSSIBLE outcomes:

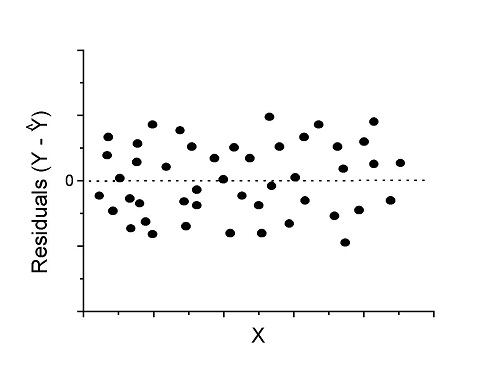

Figure 1. An ideal plot of residuals

Solution: Proceed! Assumptions of linear regression met.

Compare to plots of residuals that differ from the ideal.

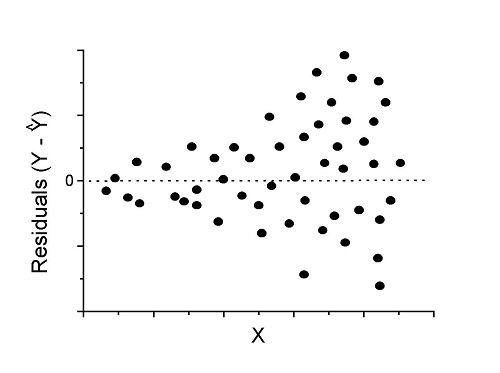

Figure 2. We have a problem. Residual plot shows a “funnel” shape — unequal variance (aka heteroscedasticity).

Solution. Try a transform like the log10-transform.

Figure 3. Problem. Residual plot shows systematic trend.

Solution. Linear model a poor fit; May be related to measurement errors for one or more predictor variables. Try adding an additional predictor variable or model the error in your general linear model.

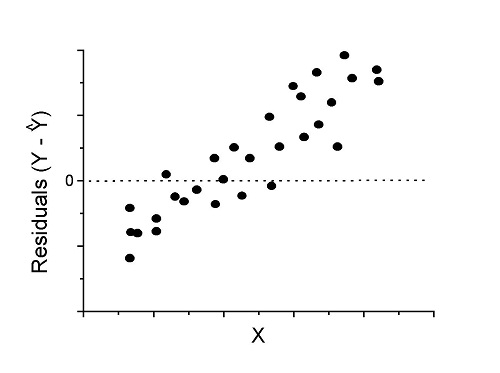

Figure 4. Problem. Residual plot shows nonlinear trend.

Solution. Transform data or use more complex model

This is a good time to mention that in statistical analyses, one often needs to do multiple rounds of analyses, involving description and plots, tests of assumptions, tests of inference. With regression, in particular, we also need to decide if our model (e.g., linear equation) is a good description of the data.

Diagnostic plot examples

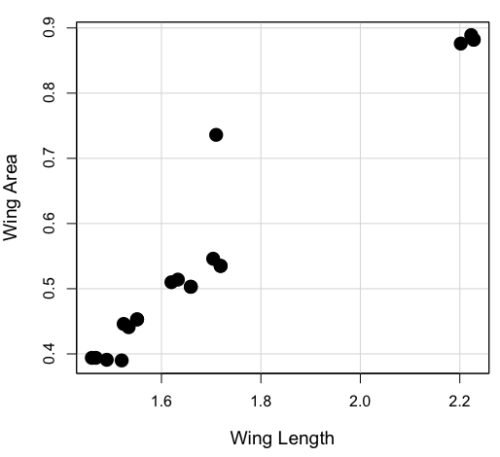

Return to our example.Tadpole dataset. To obtain residual plots, Rcmdr: Models → Graphs → Basic diagnostic plots yields four graphs. Graph A is

Figure 5. Basic diagnostic plots. A: residual plot; B: Q-Q plot of residuals; C: Scale-location (aka spread-location) plot; D: leverage residual plot.

In brief, we look at plots

A, the residual plot, to see if there are trends in the residuals. We are looking for a spread of points equally above and below the mean of zero. In Figure 5 we count seven points above and six points below zero so there’s no indication of a trend in the residuals vs the fitted VO2 (Y) values.

B, the Q-Q plot is used to see if normality holds. As discussed before, if our data are more or less normally distributed, then points will fall along a straight line in a Q-Q plot.

C, the Scale- or spread-location plot is used to verify equal variances of errors.

D, Leverage plot — looks to see if an outlier has leverage on the fit of the line to the data, i.e., changes the slope. Additionally, provides location of Cook’s distance measure (dashed red lines). Cook’s distance measures the effect on the regression by removing one point at a time and then fitting a line to the data. Points outside the dashed lines have influence.

A note of caution about over-thinking with these plots. R provides a red line to track the points. However, these lines are guides, not judges. We humans are generally good at detecting patterns, but with data visualization, there is the risk of seeing patterns where none exits. In particular, recognizing randomness is not easy. If anything, we may tend to see patterns where none exist, termed apophenia. So yes, by all means look at the graphs, but do so with a plan: red line more or less horizontal? Then no pattern and the regression model is a good fit to the data.

Statistical test options

After building linear models, run statistical diagnostic tests that compliment graphics approaches. Here, simply to introduce the tests, I included the Gosner factor as potential predictor — many of these numerical diagnostics are more appropriate for models with more than one predictor variable, the subject of the next chapter.

Results for the model ![]() were:

were:

lm(formula = VO2 ~ Body.mass + Gosner, data = frogs)

Residuals:

Min 1Q Median 3Q Max

-163.12 -125.53 -20.27 83.71 228.56

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) -595.37 239.87 -2.482 0.03487 *

Body.mass 431.20 115.15 3.745 0.00459 **

Gosner[T.II] 64.96 132.83 0.489 0.63648

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 153.4 on 9 degrees of freedom

(1 observation deleted due to missingness)

Multiple R-squared: 0.8047, Adjusted R-squared: 0.7613

F-statistic: 18.54 on 2 and 9 DF, p-value: 0.0006432

Question. Note the p-value for the coefficients — do we include a model with both body mass and Gosner Stage? See Chapter 18.5 – Selecting the best model

In R Commander, the diagnostic test options are available via

Rcmdr: Models → Numerical diagnostics

Variance inflation factors (VIF): used to detect multicollinearity among the predictor variables. If correlations are present among the predictor variables, then you can’t rely on the the coefficient estimates — whether predictor A causes change in the response variable depends on whether the correlated B predictor is also included in the model. If correlation between predictor A and B, the statistical effect is increased variance associated with the error of the coefficient estimates. There are VIF for each predictor variable. A VIF of one means there is no correlation between that predictor and the other predictor variables. A VIF of 10 is taken as evidence of serious multicollinearity in the model.

R code:

vif(RegModel.2)

R output

Body.mass Gosner

2.186347 2.186347

round(cov2cor(vcov(RegModel.2)), 3) # Correlations of parameter estimates

(Intercept) Body.mass Gosner[T.II]

(Intercept) 1.000 -0.958 0.558

Body.mass -0.958 1.000 -0.737

Gosner[T.II] 0.558 -0.737 1.000

Our interpretation

The output shows a matrix of correlations; the correlation between the two predictor variables in this model was 0.558, large per our discussions in Chapter 16.1 . Not really surprising as older tadpoles (Gosner stage II), are larger tadpoles. We’ll leave the issue of multicollinearity to Chapter 18.1.

Breusch-Pagan test for heteroscedasticity… Recall that heteroscedasticity is another name for unequal variances. This tests pertains to the residuals; we used the scale (spread) location plot (C) above. The test statistic can be calculated as ![]() . The function call is part of the car package, which we download as part of the Rcmdr package. R Commander will pop up a menu of choices for this test. For starters, accept the defaults, enter nothing, and click OK.

. The function call is part of the car package, which we download as part of the Rcmdr package. R Commander will pop up a menu of choices for this test. For starters, accept the defaults, enter nothing, and click OK.

R code:

library(zoo, pos=16) library(lmtest, pos=16) bptest(VO2 ~ Body.mass + Gosner, varformula = ~ fitted.values(RegModel.2), studentize=FALSE, data=frogs)

R output

Breusch-Pagan test data: VO2 ~ Body.mass + Gosner BP = 0.0040766, df = 1, p-value = 0.9491

Our interpretation

A p-value less than 5% would be interpreted as statistically significant unequal variances among the residuals.

Durbin-Watson for autocorrelation… used to detect serial (auto)correlation among the residuals, specifically, adjacent residuals. Substantial autocorrelation can mean Type I error for one or more model coefficients. The test returns a test statistic that ranges from 0 to 4. A value at midpoint (2) suggests no autocorrelation. Default test for autocorrelation is r > 0.

R code:

dwtest(VO2 ~ Body.mass + Gosner, alternative="greater", data=frogs)

R output

Durbin-Watson test data: VO2 ~ Body.mass + Gosner DW = 2.1336, p-value = 0.4657 alternative hypothesis: true autocorrelation is greater than 0

Our interpretation

The DW statistic was about 2. Per our definition, we conclude no correlations among the residuals.

RESET test for nonlinearity… The Ramsey Regression Equation Specification Error Test is used to interpret whether or not the equation is properly specified. The idea is that fit of the equation is compared against alternative equations that account for possible nonlinear dependencies among predictor and dependent variables. The test statistic follows an F distribution.

R code:

resettest(VO2 ~ Body.mass + Gosner, power=2:3, type="regressor", data=frogs)

R output

RESET test data: VO2 ~ Body.mass + Gosner RESET = 0.93401, df1 = 2, df2 = 7, p-value = 0.437

Our interpretation

Also a nonsignificant test — adding a power term to account for a nonlinear trend gained no model fit benefits, p-value much greater than 5%.

Bonferroni outlier test. Reports the Bonferroni p-values for testing each observation to see if it can be considered to be a mean-shift outlier (a method for identifying potential outlier values — each value is in turn replaced with the mean of its nearest neighbors, Lehhman et al 2020). Compare to results of VIF. The objective of outlier and VIF tests is to verify that the regression model is stable, insensitive to potential leverage (outlier) values.

R code:

outlierTest(LinearModel.3)

R output

No Studentized residuals with Bonferroni p < 0.05 Largest |rstudent|: rstudent unadjusted p-value Bonferroni p 7 1.958858 0.085809 NA

Our interpretation

We ran the outlierTest() function on LinearModel.3 to check for influential outliers using Studentized residuals with Bonferroni correction.

The output shows “No Studentized residuals with Bonferroni p < 0.05”, which means no data points were statistically flagged as outliers after adjusting for multiple comparisons. The largest Studentized residual was 1.96 (point 7), with an unadjusted p-value of 0.086. Although this is the largest deviation from the regression line, it is not statistically significant. Therefore, we conclude that no single observation is exerting undue influence on the model fit, and we can be confident that our regression results are not driven by outliers.

Response transformation… The concept of transformations of data to improve adherence to statistical assumptions was introduced in Chapters 3.2, 13.1, and 15.

R code:

summary(powerTransform(LinearModel.3, family="bcPower"))

R output

bcPower Transformation to Normality

Est Power Rounded Pwr Wald Lwr Bnd Wald Upr Bnd

Y1 0.9987 1 0.2755 1.7218

Likelihood ratio test that transformation parameter is equal to 0

(log transformation)

LRT df pval

LR test, lambda = (0) 7.374316 1 0.0066162

Likelihood ratio test that no transformation is needed

LRT df pval

LR test, lambda = (1) 0.00001289284 1 0.99714

Our interpretation

The Box-Cox analysis suggests that the response variable for LinearModel.3 can remain on its original scale. No power transformation was needed, and any standard linear regression inference is appropriate. The estimated transformation parameter was close to one, which suggests that the original response variable did not need a transformation. The LRT tests whether a log transformation (lambda = 0) would improve normality. The significant p-value, 0.0066, for the LRT indicates that a log transformation would not be the best choice, consistent with lambda ≈ 1. And last, the very high p-value, 0.99714, confirms that no transformation is necessary; the model residuals were close to normal.

Questions

- Write up three learning outcomes for this page. Hint: Point your favorite generative AI to this page and ask for help.

- Referring to Figures 1 – 4 on this page, which plot best suggests a regression line fits the data?

- Return to the electoral college data set and your linear models of Electoral vs. POP_2010 and POP_2019. Obtain the four basic diagnostic plots and comment on the fit of the regression line to the electoral college data.

- residual plot

- Q-Q plot

- Scale-location plot

- Leverage plot

- With respect to your answers in question 2, how well does the electoral college system reflect the principle of one person one vote?

Quiz Chapter 17.8

Assumptions and model diagnostics for Simple Linear Regression

Chapter 17 contents

- Introduction

- Simple Linear Regression

- Relationship between the slope and the correlation

- Estimation of linear regression coefficients

- OLS, RMA, and smoothing functions

- Testing regression coefficients

- Regression model fit

- Assumptions and model diagnostics for Simple Linear Regression

- References and suggested readings (Ch17 & 18)

17.7 – Regression model fit

Introduction

In Chapter 17.5 and 17.6 we introduced the example of tadpoles body size and oxygen consumption. We ran a simple linear regression, with the following output from R

RegModel.1 <- lm(VO2~Body.mass, data=example.Tadpole)

summary(RegModel.1)

Call:

lm(formula = VO2 ~ Body.mass, data = example.Tadpole)

Residuals:

Min 1Q Median 3Q Max

-202.26 -126.35 30.20 94.01 222.55

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) -583.05 163.97 -3.556 0.00451 **

Body.mass 444.95 65.89 6.753 0.0000314 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 145.3 on 11 degrees of freedom

Multiple R-squared: 0.8057, Adjusted R-squared: 0.788

F-statistic: 45.61 on 1 and 11 DF, p-value: 0.00003144

You should be able to pick out the estimates of slope and intercept from the table (intercept was -583 and slope was 445). Additionally, as part of your interpretation of the model, you should be able to report how much variation in VO2 was explained by tadpole body mass (coefficient of determination, ![]() , was 0.81, which means about 81% of variation in oxygen consumption by tadpoles is explained by knowing the body mass of the tadpole.

, was 0.81, which means about 81% of variation in oxygen consumption by tadpoles is explained by knowing the body mass of the tadpole.

What’s left to do? We need to evaluate how well our model fits the data, i.e., we evaluate regression model fit. By fit we mean, how well does our model agree to the raw data? A poor fit and the model predictions are far from the raw data; a good fit, the model accurately predicts the raw data. In other words, if we draw a line through the raw data, a good fit line crosses through most of the points — a poor fit the line rarely passes through the cloud of points.

How to judge fit? This we can do by evaluating the error components relative to the portion of the model that explains the data. Additionally, we can perform a number of diagnostics of the model relative to the assumptions we made to perform linear regression. These diagnostics form the subject of Chapter 17.8. Here, we ask how well does the model,

![]()

fit the data?

Model fit statistics

The second part of fitting a model is to report how well the model fits the data. The next sections apply to this aspect of model fitting. The first area to focus on is the magnitude of the residuals: the greater the spread of residuals, the less well a fitted line explains the data.

In addition to the output from lm() function, which focuses on the coefficients, we typically generate the ANOVA table also.

Anova(RegModel.1, type="II")

Anova Table (Type II tests)

Response: VO2

Sum Sq Df F value Pr(>F)

Body.mass 962870 1 45.605 0.00003144 ***

Residuals 232245 11

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Standard error of regression

S, the Residual Standard Error (aka Standard error of regression), is an overall measure to indicate the accuracy of the fitted line: it tells us how good the regression is in predicting the dependence of response variable on the independent variable. A large value for S indicates a poor fit. One equation for S is given by

![]()

In the above example, S = 145.3 (underlined, bold in regression output above). We can see how if ![]() is large, S will be large indicating poor fit of the linear model to the data. However, by itself S is not of much value as a diagnostic as it is difficult to know what to make of 145.3, for example. Is this a large value for S? Is it small? We don’t have any context to judge S, so additional diagnostics have been developed.

is large, S will be large indicating poor fit of the linear model to the data. However, by itself S is not of much value as a diagnostic as it is difficult to know what to make of 145.3, for example. Is this a large value for S? Is it small? We don’t have any context to judge S, so additional diagnostics have been developed.

Coefficient of determination

![]() , the coefficient of determination, is also used to describe model fit.

, the coefficient of determination, is also used to describe model fit. ![]() can take on values between 0 and 1 (0% to 100%). A good model fit has a high

can take on values between 0 and 1 (0% to 100%). A good model fit has a high ![]() value. In our example above,

value. In our example above, ![]() or 80.57%. One equation for

or 80.57%. One equation for ![]() is given by

is given by

![]()

A value of ![]() close to 1 means that the regression “explains” nearly all of the variation in the response variable, and would indicate the model is a good fit to the data. As we mentioned in Chapter 17.2, the coefficient of determination,

close to 1 means that the regression “explains” nearly all of the variation in the response variable, and would indicate the model is a good fit to the data. As we mentioned in Chapter 17.2, the coefficient of determination, ![]() , is the squared value of r, the product moment correlation.

, is the squared value of r, the product moment correlation.

Adjusted R-squared

Before moving on we need to remark on the difference between ![]() and adjusted

and adjusted ![]() . For Simple Linear Regression there is but one predictor variable, X; for multiple regression there can be many additional predictor variables. Without some correction,

. For Simple Linear Regression there is but one predictor variable, X; for multiple regression there can be many additional predictor variables. Without some correction, ![]() will increase with each additional predictor variables. This doesn’t mean the model is more useful, however, and in particular, one cannot compare

will increase with each additional predictor variables. This doesn’t mean the model is more useful, however, and in particular, one cannot compare ![]() between models with different numbers of predictors. Therefore, an adjustment is used so that the coefficient of determination remains a useful way to assess how reliable a model is and to permit comparisons of models. Thus, we have the Adjusted

between models with different numbers of predictors. Therefore, an adjustment is used so that the coefficient of determination remains a useful way to assess how reliable a model is and to permit comparisons of models. Thus, we have the Adjusted ![]() , which is calculated as

, which is calculated as

![]()

In our example above, Adjusted R 2 = 0.3806 or 38.06%.

Which should you report? Adjusted ![]() ,because it is independent of the number of parameters in the model.

,because it is independent of the number of parameters in the model.

Both ![]() and S are useful for regression diagnostics, a topic which we will discuss next (Chapter 17.8).

and S are useful for regression diagnostics, a topic which we will discuss next (Chapter 17.8).

Questions

- Write up three learning outcomes for this page. Hint: Point your favorite generative AI to this page and ask for help.

- As you may know, the US census is used to reapportion congress and also to determine the number of electoral college votes. In honor of the election for US President that’s just days away, in the next series of questions in this Chapter and subsequent sections of Chapter 17 and 18, I’ll ask you to conduct a regression analysis on the electoral college. For starters, make the regression of Electoral votes on 2010 census population. (Ignore for now the other columns, just focus on POP_2019 and Electoral.) Report the

- regression coefficients (slope, intercept)

- percent of the variation in electoral college votes explained by the regression (

).

).

- Make a scatterplot and add the regression line to the plot

Quiz Chapter 17.7

Regression model fit

Data set

USA population year 2010 and year 2019 with Electoral College counts

| State | Region | Division | POP_2010 | POP_2019 | Electoral |

|---|---|---|---|---|---|

| Alabama | South | East South Central | 4779736 | 4903185 | 9 |

| Alaska | West | Pacific | 710231 | 731545 | 3 |

| Arizona | West | Mountain | 6392017 | 7278717 | 11 |

| Arkansas | South | West South Central | 2915918 | 3017804 | 6 |

| California | West | Pacific | 37253956 | 39512223 | 55 |

| Colorado | West | Mountain | 5029196 | 5758736 | 9 |

| Connecticut | Northeast | New England | 3574097 | 3565287 | 7 |

| Delaware | South | South Atlantic | 897934 | 982895 | 3 |

| District of Columbia | South | South Atlantic | 601723 | 705749 | 3 |

| Florida | South | South Atlantic | 18801310 | 21477737 | 29 |

| Georgia | South | South Atlantic | 9687653 | 10617423 | 16 |

| Hawaii | West | Pacific | 1360301 | 1415872 | 4 |

| Idaho | West | Mountain | 1567582 | 1787065 | 4 |

| Illinois | Midwest | East North Central | 12830632 | 12671821 | 20 |

| Indiana | Midwest | East North Central | 6483802 | 6732219 | 11 |

| Iowa | Midwest | West North Central | 3046355 | 3155070 | 6 |

| Kansas | Midwest | West North Central | 2853118 | 2913314 | 6 |

| Kentucky | South | East South Central | 4339367 | 4467673 | 8 |

| Louisiana | South | West South Central | 4533372 | 4648794 | 8 |

| Maine | Northeast | New England | 1328361 | 1344212 | 4 |

| Maryland | South | South Atlantic | 5773552 | 6045680 | 10 |

| Massachusetts | Northeast | New England | 6547629 | 6892503 | 11 |

| Michigan | Midwest | East North Central | 9883640 | 9883635 | 16 |

| Minnesota | Midwest | West North Central | 5303925 | 5639632 | 10 |

| Mississippi | South | East South Central | 2967297 | 2976149 | 6 |

| Missouri | Midwest | West North Central | 5988927 | 6137428 | 10 |

| Montana | West | Mountain | 989415 | 1068778 | 3 |

| Nebraska | Midwest | West North Central | 1826341 | 1934408 | 5 |

| Nevada | West | Mountain | 2700551 | 3080156 | 6 |

| New Hampshire | Northeast | New England | 1316470 | 1359711 | 4 |

| New Jersey | Northeast | Mid-Atlantic | 8791894 | 8882190 | 14 |

| New Mexico | West | Mountain | 2059179 | 2096829 | 5 |

| New York | Northeast | Mid-Atlantic | 19378102 | 19453561 | 29 |

| North Carolina | South | South Atlantic | 9535483 | 10488084 | 15 |

| North Dakota | Midwest | West North Central | 672591 | 762062 | 3 |

| Ohio | Midwest | East North Central | 11536504 | 11689100 | 18 |

| Oklahoma | South | West South Central | 3751351 | 3956971 | 7 |

| Oregon | West | Pacific | 3831074 | 4217737 | 7 |

| Pennsylvania | Northeast | Mid-Atlantic | 12702379 | 12801989 | 20 |

| Rhode Island | Northeast | New-England | 1052567 | 1059361 | 4 |

| South Carolina | South | South-Atlantic | 4625364 | 5148714 | 9 |

| South Dakota | Midwest | West-North-Central | 814180 | 884659 | 3 |

| Tennessee | South | East-South-Central | 6346105 | 6829174 | 11 |

| Texas | South | West-South-Central | 25145561 | 28995881 | 38 |

| Utah | West | Mountain | 2763885 | 3205958 | 6 |

| Vermont | Northeast | New-England | 625741 | 623989 | 3 |

| Virginia | South | South-Atlantic | 8001024 | 8535519 | 13 |

| Washington | West | Pacific | 6724540 | 7614893 | 12 |

| West Virginia | South | South-Atlantic | 1852994 | 1792147 | 5 |

| Wisconsin | Midwest | East-North-Central | 5686986 | 5822434 | 10 |

| Wyoming | West | Mountain | 563626 | 578759 | 3 |

Chapter 17 contents

- Introduction

- Simple Linear Regression

- Relationship between the slope and the correlation

- Estimation of linear regression coefficients

- OLS, RMA, and smoothing functions

- Testing regression coefficients

- ANCOVA – Analysis of covariance

- Regression model fit

- Assumptions and model diagnostics for Simple Linear Regression

- References and suggested readings (Ch17 & 18)

17.5 – Testing regression coefficients

Introduction

Whether the goal is to create a predictive model or an explanatory model, then there are two related questions the analyst asks about the linear regression model fitted to the data:

- Does a line actually fit the data

- Is the linear regression statistically significant?

We will turn to the first question pertaining to fit in time, but for now, focus on the second question.

Like the one-way ANOVA, we have the null and alternative hypothesis for the regression model itself. We write our hypotheses for the regression

H0 : linear regression fits the data vs. HA : linear regression does not fit

We see from the output in R and Rcmdr that an ANOVA table has been provided. Thus, the test of the regression is analogous to an ANOVA — we partition the overall variability in the response variable into two parts: the first part is the part of the variation that can be explained by there being a linear regression (the linear regression sum of squares) plus a second part that accounts for the rest of the variation in the data that is not explained by the regression (the residual or error sum of squares). Thus, we have

![]()

As we did in ANOVA, we calculate Mean Squares ![]() , where x refers to either “regression” or “residual” sums of squares and degrees of freedom. We then calculate F-values, the test statistics for the regression, to test the null hypothesis.

, where x refers to either “regression” or “residual” sums of squares and degrees of freedom. We then calculate F-values, the test statistics for the regression, to test the null hypothesis.

The degrees of freedom (DF) in simple linear regression are always

![]()

where

![]()

and FDFregression, DFresidual are compared to the critical value at Type I error rate α, with DFregression, DFresidual.

Linear regression inference

Estimation of the slope and intercept is a first step and should be accompanied by the calculation of confidence intervals.

What we need to know if we are to conclude that there’s a functional relationship between the X and Y variable is whether the same relationship exists in the population. We’ve sampled from the population, calculated an equation to describe the relationship between them. However, just as in all cases of inferential statistics, we need to consider the possibility that, through chance alone, we may have committed a Type I error.

The graph below (Fig 1) shows a possible outcome under a scenario in which the statistical analyst would likely conclude that there is a statistically significant linear model fit to the data, but the true relationship in the population was a slope of zero. How can this happen? Under this scenario, by chance alone the researchers sampled points (circled in red) from the population that fall along a line. We will conclude that there is linear relationship — that’s what are inferential statistics work would indicate — but there was none in the population from which the sampling was done; there would be no way for us to recognize the error except to repeat the experiment — the principal of research reproducibility — with a different sample.

Figure 1. Scatterplot of hypothetical x,y data for which the researcher may obtain a statistically significant linear fit to sample of data from population in which null hypothesis is true relationship between x and y.

So in conclusion, you must keep in mind the meaning of statistical significance in the context of statistical inference: it is inference done on a background of random chance, the chance that sampling from the population leads to a biased sample of subjects.

Note 1. If you think about what I did with this data for Figure 1 graph, purposely selecting data that showed a linear relationship between Y and X (red circles), then you should recognize this as an example of data dredging or p-hacking, cf. discussion in Head et al (2015); Stefan and Schönbrodt (2023). However, the graph is supposed to be read as if we could do a census and therefore have full knowledge of the true relationship between y and x. The red circles indicate the chance that sampling from a population may sometimes yield incorrect conclusions.

Tests of coefficients

One criterion for a good model is that the coefficients in the model, the intercept and the slope(s) are all statistically significant.

For the statistical of the slope, b1, we generally treat the test as a two-tailed test of the null hypothesis that the regression slope is equal to zero.

H0 : b1 = 0 vs. HA : b1 ≠ 0

Similarly, for the statistical of the intercept, b0, we generally treat the test as a two-tailed test of the null hypothesis that the Y-intercept is equal to zero.

H0 : b0 = 0 vs. HA : b0 ≠ 0

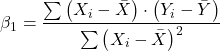

For both slope and intercept we use t-statistics.

![]()

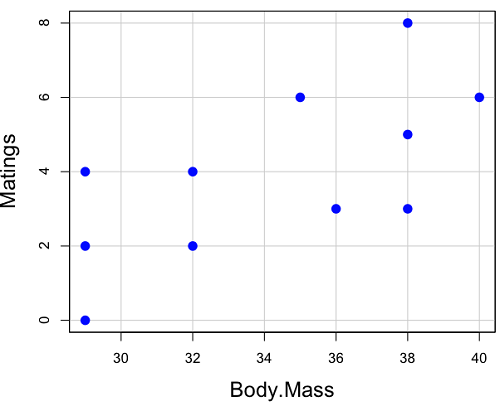

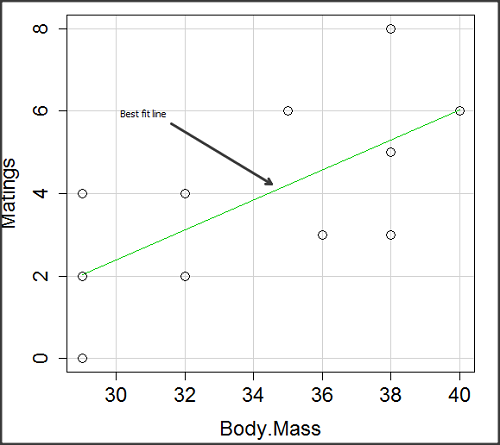

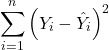

We’ll illustrate the tests of the slope and intercept by letting R and Rcmdr do the work. You’ll find this simple data set at the bottom of this page (scroll or click here). The first variable is the number of matings, the second is the size of the paired female, and the third is the size of the paired male. All body mass were in grams.

R code

After loading the worksheet into R and Rcmdr, begin by selecting

Rcmdr: Statistics → Fit model → Linear Regression

Note that more than one predictor can be entered, but only one response (dependent) variable may be selected (Fig 2).

Figure 2. Screenshot linear regression menu. More than explanatory (predictor or independent) variables may be selected, but only one response (dependent) variable may be selected.

This procedure will handle simple and multiple regression problems. But before we go further, answer these two questions for the data set.

Question 1. What is the Response variable?

Question 2. What is the Explanatory variable?

Answers. See below in the R output to see if you were correct!

If there is only one predictor, then this is a Simple Linear Regression; if more than one predictor is entered, then this is a Multiple Linear Regression. We’ll get some more detail, but for now, identify and evaluate the test (p-value) of the slope coefficient (labeled after the name of the predictor variable), the test of the intercept coefficient, and some new stuff.

R output

RegModel.1 <- lm(Matings ~ Female, data=birds)

summary(RegModel.1)

Call: lm(formula = Matings ~ Female, data = birds)

Residuals:

Min 1Q Median 3Q Max

-2.32805 -1.59407 -0.04359 1.77292 2.67195

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) -8.6175 3.5323 -2.440 0.02528 *

Female 0.3670 0.1042 3.524 0.00243 **

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 1.774 on 18 degrees of freedom

Multiple R-squared: 0.4082, Adjusted R-squared: 0.3753 F-statistic: 12.42 on 1 and 18 DF, p-value: 0.002427

In this example, slope = 0.367 and the intercept = -8.618. The first new term we encounter is called Multiple R-squared (R2) — it’s also called the coefficient of determination. It’s the ratio of the sum of squares due to the regression to the total sums of squares. R2 ranges from zero to 1, with a value of 1 indicating a perfect fit of the regression to the data.

Note 2. If you are looking for a link between correlation and simple linear regression, then here it is: R2 is the square of the product-moment correlation, r. Thus, r = √R2 see Chapter 17.2).

We introduced ![]() in Chapter 17.1 . Interpretation of R2 goes like this: If R2 is close to zero, then the regression model does not explain much of the variation in the dependent variable; conversely, if R2 is close to one, then the regression model explains a lot of the variation in the dependent variable.

in Chapter 17.1 . Interpretation of R2 goes like this: If R2 is close to zero, then the regression model does not explain much of the variation in the dependent variable; conversely, if R2 is close to one, then the regression model explains a lot of the variation in the dependent variable.

Did you get the Answers?

Answer 1: Number of matings

Answer 2: Size of females

Interpreting the output

Recall that

![]()

then

![]()

From the output we see that R2 was 0.408, which means that about 40% of the variation in numbers of matings may be explained by size of the females alone.

To complete the analysis get the ANOVA table for the regression.

Rcmdr: Models → Hypothesis tests → ANOVA table…

> Anova(RegModel.1, type="II")

Anova Table (Type II tests)

Sum Sq Df F value Pr(>F)

Female 39.084 1 12.415 0.002427 **

Residuals 56.666 18

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

With only one predictor (explanatory) variable, note that the regression test in the ANOVA is the same (has the same probability vs. the null hypothesis) as the test of the slope.

Test two slopes

A more general test of the null hypothesis involving the slope might be

H0 : b1 = b vs. HA : b1 ≠ b

where b can be any value, including zero. Again, t-test would then be used to conduct the test of the slope, where the t-test would have the form

![]()

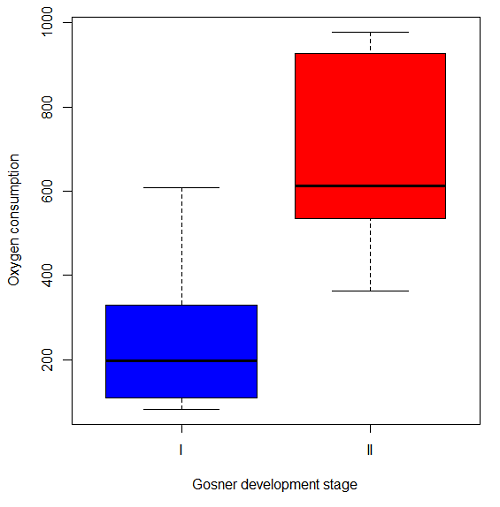

where SEb1-b2 is the standard error of the difference between the two regression coefficients. We saw something similar to this value back when we did a paired t-test. To obtain sb1–b2, we need the pooled residual mean square and the squared sums of the X values for each of our sample.

First, the pooled (hence the subscript p) residual mean square is calculated as

![]()

where resSS and resDF refer to residual sums of squares and residual degrees of freedom for the first (1) and second (2) regression equations.

Second, the standard error of the difference between regression coefficients (squared!!) is calculated as

where the subscript “1” and “2” refer to the X values from the first sample (eg, the body size values for the males) and the second sample (eg, the body size values for the females).

Note 3. To obtain the squared sum in R and Rcmdr, use the Calc function (eg, two sum the squared X values for the females, use SUM(‘Female’*’Female’)).

We can then use our t-test, with the degrees of freedom now

![]()

Alternatively, the t-test of two slopes can be written as

![]()

with again ![]() .

.

In this way, we can see a way to test any two slopes for equality. This would be useful if we wanted to compare two samples (eg, males and females) and wanted to see if the regressions were the same (eg, metabolic rate covaried with body mass in the same way — that is, the slope of the relationship was the same). This situation arises frequently in biology. For example, we might want to know if male and female birds have different mean field metabolic rates, in which case we might be tempted to use a one-way ANOVA or t-test (since one factor with two levels). However, if males and females also differ for body size, then any differences we might see in metabolic rate could be due to differences in metabolic rate or to differences in the covariable body size. The test is generally referred to as the analysis of covariance (ANCOVA), which is the subject for Chapter 17.6. In brief, ANCOVA allows you to test for mean differences in traits like metabolic rate between two or more groups, but after first accounting for covariation due to another variable (eg, body size). However, ANCOVA makes the assumption that relationship between the covariable and the response variable is the same in the two groups. This is the same as saying that the regression slopes are the same. Let’s proceed to see how we can compare regression slopes, then move to a more general treatment in Chapter 17.6.

Example

For a sample of 13 tadpoles (Rana pipiens), hatched in the laboratory, oxygen consumption at rest was recorded with a YSI meter, model 51B, and a YSI oxygen – temperature probe (Yellow Spring Instruments Co., Yellow Springs, Ohio). Individual tadpoles were placed into a mason jar (volume 885 ml), with a magnetic stir bar housed in a slotted Petri dish glued to the bottom of the jar to gently stir the water. Water samples from the aquarium were used and petroleum jelly served as a sealant once the vessel had been fitted with the probe. Oxygen consumption was recorded in ppm and ![]() values (ml O2 STP · hr-1). The tadpoles were scored for developmental stage by Gosner’s (1960) criteria. The tadpoles ranged from Gosner stage 35 to 44.

values (ml O2 STP · hr-1). The tadpoles were scored for developmental stage by Gosner’s (1960) criteria. The tadpoles ranged from Gosner stage 35 to 44.

Note 4. Gosner stages 1 through 25 include the embryonic stages; By stage 31, toes develop on the limb buds; Following stage 40, substantial body changes are observed in the growing tadpole, transitioning from tailed tadpole to tail-less adult form. Complete metamorphosis is assigned Gosner stage 45.

Figure 3. Pearson Scott Foresman, Public domain, via Wikimedia Commons

The question we addressed: metamorphosis of Anuran tadpoles to adults (frogs) likely involves increased metabolic demands, demands that may exceed simple allometric predictions based on increase in body size during development. Thus, we predicted that mass-specific metabolic rate for metamorphic frogs would exceed mass-specific metabolism of pre-metamorphic frogs.

M. Dohm unpublished results from my undergraduate days (1986!), you’ll find this simple data set at the bottom of this page (scroll or click here). For simplicity, we grouped all tadpoles between stages 35 and 38 as Stage I and all tadpoles between 39 and 44 as stage II. No tadpoles who completed metamorphosis were included in the dataset. It shouldn’t surprise you — with such a small sample size, the project lacked power to test the hypothesis, but many such studies, it’s worth looking at in the case anyway because the prevailing sentiment is that the metabolic cost of metamorphosis is significant — in other words, we expect a large effect size — and remains an ongoing research effort (see Burraco et al 2019).

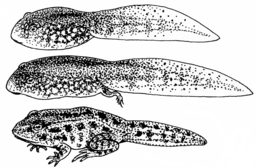

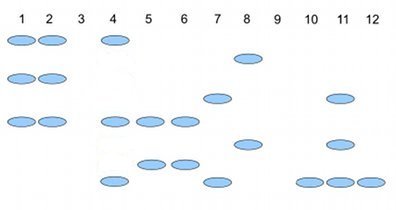

You should confirm your self, but the slope of the regression equation of oxygen consumption (ml O2/hour) on body mass (g) was 444.95 (with SE = 65.89). Plot of data shown in Figure 4.

Figure 4. Scatterplot of oxygen consumption by tadpoles (blue: Gosner developmental stage I [35 – 38]; red: Gosner developmental stage II [39 – 44]), vs body mass (g).

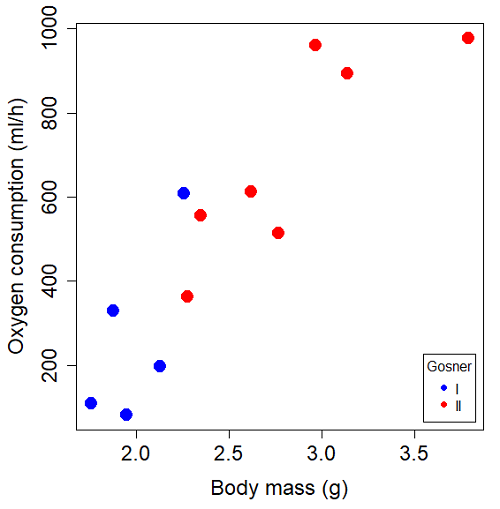

The project looked at whether metabolism as measured by oxygen consumption was consistent across two developmental stages. Metamorphosis in frogs and other amphibians represents profound reorganization of the organism as the tadpole moves from water to air. Thus, we would predict some cost as evidenced by change in metabolism associated with later stages of development. Figure 5 shows a box plot of tadpole oxygen consumption by Gosner (1960) developmental stage.

Figure 5. Boxplot of oxygen consumption by Gosner developmental stages (blue: stage I; red: stage 2).

Looking at Figure 4 we see a trend consistent with our prediction; developmental stage may be associated with increased metabolism. However, older tadpoles also tend to be larger, and the plot in Figure 4 does not account for that. Thus, body mass is a confounding variable in this example. There are several options for analysis here (eg, ANCOVA), but one way to view this is to compare the slopes for the two developmental stages. While this test does not compare the means, it does ask a related question: is there evidence of change in rate of oxygen consumption relative to body size between the two developmental stages? The assumption that the slopes are equal is a necessary step for conducting the ANCOVA, which we describe in Chapter 17.6.

So, divide the data set into two groups by developmental stage (12 tadpoles could be assigned to one of two developmental stages; one tadpole was at a lower Gosner stage than the others and so is dropped from the subset).

Gosner stage I (stages 35 – 39)

| Body mass | |

| 1.76 | 109.41 |

| 1.88 | 329.06 |

| 1.95 | 82.35 |

| 2.13 | 198 |

| 2.26 | 607.7 |

Gosner stage II (stage 40 – 44)

| Body mass | |

| 2.28 | 362.71 |

| 2.35 | 556.6 |

| 2.62 | 612.93 |

| 2.77 | 514.02 |

| 2.97 | 961.01 |

| 3.14 | 892.41 |

| 3.79 | 976.97 |

The slopes and standard errors were

| Gosner Stage I | Gosner stage II | |

| slope | 750.0 | 399.9 |

| standard error of slope | 444.6 | 111.2 |

Are the two slopes equal?

Looking at the table, we would say, no. slopes look different (750 vs 399.9). However, the errors are large and, given this is a small data set, we need to test statistically; are the slopes indistinguishable (Ho: bI = bII), where bI is the slope for the Gosner Stage I subset and bII is the slope for the Gosner Stage II subset?

To use our tests discussed above, we need sum of squared X values for Gosner Stage I and sum of squared for Gosner stage II results. We can get these from the ANOVA tables. Recall that after applying

ANOVA regression Gosner Stage I, R work

Anova(gosI.1, type="II")

Anova Table (Type II tests)

Response: VO2

Sum Sq Df F value Pr(>F)

Body.mass 89385 1 2.8461 0.1902

Residuals 94220 3

ANOVA regression Gosner Stage II

Anova(gosII.1, type="II")

Anova Table (Type II tests)

Response: VO2

Sum Sq Df F value Pr(>F)

Body.mass 258398 1 12.935 0.0156 *

Residuals 99882 5

Rcmdr: Models – Compare model coefficients..

compareCoefs(gosI.1, gosII.1)

Calls:

1: lm(formula = VO2 ~ Body.mass, data = gosI)

2: lm(formula = VO2 ~ Body.mass, data = gosII)

Model 1 Model 2

(Intercept) -1232 -441

SE 891 321

Body.mass 750 400

SE 445 111

SSgosnerI = 89385

SSgosnerII = 258398

We also need the residual Mean Squares (SSresid/DF resid) from the ANOVA tables

MSgosnerI = 94220/3 = 31406.67

MSgosnerII = 99882/5 = 19976.4

[under construction] Therefore, the pooled residual MS (s2x.y)p = nnn and the pooled SE of the difference sb1-b2 = nnn using the formulas above.

Now, we plug in the values I can get a t-test = (750 – 444.6)/nnn = nnn

The DF for this t-test are n1 + n2 – 4 = 4 + 6 – 4 = 6.

Using Table of Student’s t distribution (Appendix), I find the two-tailed critical value for t at alpha = 5% with DF = 6 is equal to 3.758. Since nn >> 3.758, we conclude that the two slopes are statistically different and P < 0.001 that the null hypothesis (Ho: bw = ball) was true. (edit: fix nn).

Questions

1. Write up three learning outcomes for this page. Hint: Point your favorite generative AI to this page and ask for help.

2. Metabolic rates like oxygen consumption over time are well-known examples of allometric relationships. That is, many biological variables (eg, ![]() is related as a · Mb, where M is body mass, b is scaling exponent (the slope!), and a is a constant (the intercept!)), and best evaluated on log-log scale. Redo the linear regression of oxygen consumption vs. body mass for the tadpoles, but this time, apply log10-transform to

is related as a · Mb, where M is body mass, b is scaling exponent (the slope!), and a is a constant (the intercept!)), and best evaluated on log-log scale. Redo the linear regression of oxygen consumption vs. body mass for the tadpoles, but this time, apply log10-transform to VO2 and to Body.mass. For example

Data in this page, bird matings

| Body.Mass | Matings |

|---|---|

| 29 | 0 |

| 29 | 2 |

| 29 | 4 |

| 32 | 4 |

| 32 | 2 |

| 35 | 6 |

| 36 | 3 |

| 38 | 3 |

| 38 | 5 |

| 38 | 8 |

| 40 | 6 |

Data in this page, Oxygen consumption, ![]() , of Anuran tadpoles

, of Anuran tadpoles

| Gosner | Body mass | VO2 |

|---|---|---|

| NA | 1.46 | 170.91 |

| I | 1.76 | 109.41 |

| I | 1.88 | 329.06 |

| I | 1.95 | 82.35 |

| I | 2.13 | 198 |

| II | 2.28 | 362.71 |

| I | 2.26 | 607.7 |

| II | 2.35 | 556.6 |

| II | 2.62 | 612.93 |

| II | 2.77 | 514.02 |

| II | 2.97 | 961.01 |

| II | 3.14 | 892.41 |

Gosner refers to Gosner (1960), who developed a criteria for judging metamorphosis staging.

Quiz Chapter 17.5

Testing regression coefficients

Chapter 17 contents

- Introduction

- Simple Linear Regression

- Relationship between the slope and the correlation

- Estimation of linear regression coefficients

- OLS, RMA, and smoothing functions

- Testing regression coefficients

- ANCOVA – Analysis of covariance

- Regression model fit

- Assumptions and model diagnostics for Simple Linear Regression

- References and suggested readings (Ch17 & 18)

17.4 – OLS, RMA, and smoothing functions

Introduction

OLS or ordinary least squares is the most commonly used estimation procedure for fitting a line to the data. For both simple and multiple regression, OLS works by minimizing the sum of the squared residuals. OLS is appropriate when the linear regression assumptions LINE apply. In addition, further restrictions apply to OLS including that the predictor variables are fixed and without error. OLS is appropriate when the goal of the analysis is to retrieve a predictive model. OLS describes an asymmetric association between the predictor and the response variable: the slope bX for Y ~ bXX will generally not be the same as the slope bY for X ~ bYY.

OLS is appropriate for assessing functional relationships (i.e., inference about the coefficients) as long as the assumptions hold. In some literature, OLS is referred to as a Model I regression.

Generalized Least Squares

Generalized linear regression is an estimation procedure related to OLS but can be used either when variances are unequal or multicollinearity is present among the error terms.

Weighted Least Squares

A conceptually straightforward extension of OLS can be made to account for situation where the variances in the error terms are not equal. If the variance of Yi varies for each Xi, then a weighting function based on the reciprocal of the estimated variance may be used.

![]()

then, instead of minimizing the squared residuals as in OLS, the regression equation estimates in weighted least squares minimizes the squared residuals summed over the weights.

![]()

Weighted least squares is a form of generalized least squares. In order to estimate wi, however, multiple values of Y for each observed X must be available.

Reduced Major Axis